Fake Employees: The Rise of Deepfake Job Applicants in Hiring

Modern hiring is facing a new type of fraudster: candidates who don’t actually exist. Powered by deepfakes and synthetic identities created using advanced AI technology, these fake applicants slip through interviews and background checks only to infiltrate companies under false pretenses. In an era of remote work and global recruiting, this threat has moved from science fiction to alarming reality. Multiple organizations – including tech companies and government agencies – have already reported incidents of fraudulent hires using AI-altered faces, voices, and documents. The implications are stark: if a “deepfake employee” can talk their way into your workforce, they can access sensitive data, steal assets, or even undermine regulatory compliance. This article explores how deepfake job applicants operate: how attackers fabricate convincing human personas, why those personas evade traditional hiring checks, and what businesses must do to defend against this emergent risk.

The New Hiring Threat: Deepfake Candidates Are Real

Not long ago, the idea of onboarding a completely synthetic employee felt far-fetched. Today it’s a genuine threat. Law enforcement and cybersecurity experts are sounding the alarm that deepfake job applicants are actively targeting businesses. In June 2022, the FBI’s Internet Crime Complaint Center warned of a surge in complaints about criminals using deepfake videos and stolen identities to apply for remote tech jobs. These aren’t just pranksters – some cases are tied to organized cybercrime and even state-backed operations. For example, one high-profile scheme involved North Korean operatives using AI-altered profile photos and stolen personal data to secure IT positions in U.S. companies. Over several years they collectively earned tens of millions of dollars in salary, even stealing source code and extorting employers, all while funneling proceeds to the North Korean regime. Increasingly, AI-altered faces, voices, and documents—forms of manipulated content—are being used to deceive employers and complicate verification processes.

Remote work has widened the attack surface dramatically. When hiring managers never meet candidates in person, it’s easier for bad actors to misrepresent themselves. Video calls, online forms, and emailed documents create a degree of separation that imposters exploit. COVID-19 normalized virtual hiring, and threat actors have capitalized on that shift. As one security expert put it, “Remote work didn’t create this problem, but it made it scalable. Generative AI finished the job.” Attackers can now fabricate convincing human personas at low cost and high speed, assembling in hours what used to take months of social engineering. Fraudsters are also generating new identities using deepfake technology to bypass traditional hiring checks, including creating fake documents and impersonating real individuals. The result? A wave of fake candidates blending into real applicant pools. In fact, Gartner predicts that by 2028 one in four candidate profiles worldwide will be fake. Hiring teams are already seeing the red flags: HR professionals have caught candidates with face-swapped videos, voices that don’t match their lip movements, and perfect resumes that later prove to be fabrications. The threat is no longer hypothetical – it’s here now, and businesses must adapt before an invisible impostor becomes their next insider threat.

What a Deepfake Job Applicant Looks Like in 2026

Deepfake candidates use a mix of AI-driven tricks to create a believable but fictitious persona. By 2026, their toolkits have grown sophisticated. Here’s how they build a fake applicant from the ground up:

- AI-Generated Photos and Profiles: Using generative adversarial networks, scammers create realistic headshots for profile pictures that don’t correspond to any real person. These polished photos get paired with fabricated names and work histories on LinkedIn and resumes. (It’s easier than ever to generate a professional-looking headshot and bio with AI.) Cybercriminals even set up entire fake LinkedIn profiles and online footprints. In one case, thousands of bot-made profiles on LinkedIn posed as executives at real companies, fooling even seasoned professionals and recruiters. The fake personas look “lived-in” online, complete with endorsements and past roles, making it hard to tell they’re completely made-up.

- Deepfake Video Interviews: The hallmark of these scams is using AI to masquerade in live video calls. Fraudsters apply real-time deepfake filters or face-swapping software so that the person on camera appears as someone else – often matching the stolen or AI-generated photo. Deepfake technology can manipulate a person's face in real time, altering facial expressions, mouth movements, and even eye contact to create a convincing illusion. On the surface, the interviewee looks and sounds normal, but subtle cues can give them away. The FBI noted instances where the candidate’s lip movements and facial expressions didn’t perfectly sync with the audio, or they’d “sneeze” without any sound. In a recorded incident that went viral in 2025, a hiring manager at a security firm suspected an interviewee was using a face filter. He asked the candidate to cover their face with a hand – an impromptu liveness test. The person refused, knowing it would “break” the fake overlay, effectively exposing the deepfake. This kind of AI face mask can be startlingly convincing until it slips. Recent advancements now allow deepfake technology to manipulate the entire body, not just the face, enabling full-body animations and making fake applicants even harder to detect during video interviews.

- Voice Cloning and Pre-Recorded Answers: Audio deepfakes add another layer. Scammers use AI voice cloning to match the fake persona, ensuring the voice on phone screens aligns with the claimed identity. Others take a simpler route: they play pre-recorded interview responses or scripted answers triggered on cue. In some reports, companies have caught candidates whose audio seemed out of phase with their video, suggesting a manipulated or pre-recorded track was being used. Voice spoofing tools can mimic accents and tone, helping fraudsters pass phone screenings or one-way video interviews where applicants record answers. The goal is to minimize live improvisation so the “applicant” can’t be caught off guard.

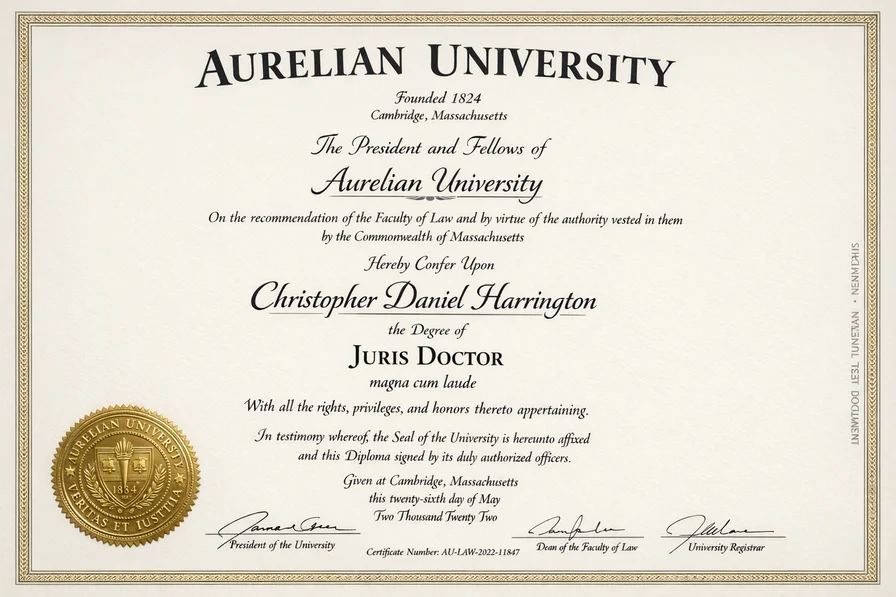

A Fake University Diploma Created Fully By AI.

- Forged Documents and Credentials: Deepfake applicants backstop their identity with fabricated paperwork. AI-powered document forgers can produce fake government IDs, diplomas, certifications, even pay stubs and tax forms that look authentic. Instead of Photoshop hack jobs, these are often synthetic IDs generated from scratch with photorealistic precision – correct fonts, holograms, watermarks and all. For instance, an imposter might submit an AI-generated driver’s license and social security number to satisfy background checks, often using stolen personal data (PII) from real people to concoct a “Frankenstein” identity. The FBI noted many cases of fraudsters using stolen names, addresses, and SSNs on applications; only later did background checks reveal the PII belonged to someone else. By blending real data with fake documents, scammers create synthetic identities that can slip past cursory manual review.

- Fabricated Work Histories and References: To pad their resume, these fake candidates invent past employment at companies that either don’t exist or that they themselves set up. It’s not uncommon for fraudsters to register sham businesses or websites to serve as fake “previous employers.” They can list a credible-sounding company on their CV and even have collaborators pose as references on phone calls. In some cases, entire “reference farms” exist where hired individuals vouch for the fake applicant with glowing reviews. Online, they might maintain a web of deceit: LinkedIn pages for fake former managers, phony reference letters on counterfeit letterheads, and so on. Combined with a polished resume (often written by AI to match job postings exactly), it becomes a layered illusion. One hiring manager observed that AI-enabled candidates often have overly perfect but vague resumes and references, which fall apart under detailed questioning. This highlights why a deepfake hire can be so hard to spot – every piece of the identity supports the lie, unless you look very closely.

Taken together, these tactics blend document fraud, biometric manipulation, synthetic identity creation, and social engineering into a single attack. A deepfake applicant will look qualified on paper, sound competent in an interview, and present valid “ID” documents – checking all the traditional boxes. To an overworked recruiter, nothing seems amiss. That’s why manual HR screening alone often fails to catch them until it’s too late. The broader impact of deepfake technology is forcing organizations to rethink hiring and verification processes.

Why Traditional Hiring Checks Are Failing

Most companies’ hiring and vetting practices were never designed to face adversarial, AI-assisted fraud. Legacy recruitment checks are struggling to detect deepfake applicants, for several reasons:

- Superficial ID Verification: Employers usually collect a copy of a driver’s license or passport as part of onboarding, but a visual inspection by HR staff isn’t enough to catch an AI-crafted fake. Modern forgeries can include flawless holograms, barcodes, and microprint. Human eyes – untrained in document forensics – often can’t discern an authentic ID from a sophisticated fake. Manual reviews also won’t reveal if an image has been digitally altered. In short, a well-made fake ID can fool standard HR checks. Traditional background screening companies may flag obviously stolen SSNs or criminal records, but if the fake identity is entirely synthetic (with no prior history), it may come back “clean,” raising no red flags.

- Video Interviews Without Liveness Checks: Many organizations treat video calls as proof that “we saw the person,” but without any liveness tests or verification, a video interview can be spoofed. Deepfake overlays and voice clones can sail through a predictable interview script. Recruiters are not trained to notice subtle artifacts like a slight lip-sync delay or synthetic skin textures on a webcam feed. And one cannot expect a busy hiring manager to ask every candidate to perform impromptu identity challenges on camera (like covering their face or moving in ways deepfakes struggle with). If the interview process has no built-in anti-spoofing measures, a determined imposter can convincingly pretend to be someone else for the duration of a Zoom call.

- Reliance on Static Background Data: Traditional background checks and references assume the identity presented is genuine. They look for criminal records, employment verification, or credit history tied to a name – but what if the name and data itself are fabricated or stolen? Many checks pull from databases that might have already been compromised. If a scammer is using stolen personal information from a real person with a clean record, the background check may report “all clear.” Or if the identity is totally synthetic (with no records at all), some systems might not flag that as suspicious by default. This “garbage in, garbage out” problem means a fake candidate can pass screening if the underlying identity data isn’t verified as real.

- Undertrained Gatekeepers: Recruiters and HR personnel are experts in talent acquisition, not fraud detection. Expecting them to spot expertly forged documents or deft deepfakes without special tools is unrealistic. Most recruiters are not trained to recognize patterns—such as irregular blinking, lighting inconsistencies, or unnatural facial gestures—that might indicate a deepfake. As one security hiring manager noted, recruiters have been conditioned to “pitch” jobs to candidates in a tight labor market, focusing on wooing talent rather than scrutinizing for deception. With speed and efficiency as priorities, many teams may miss warning signs like inconsistencies in a candidate’s story or hesitance to meet on video. Hiring staff are not forensic analysts, and without training or technology, they’re outmatched by AI-enhanced fraudsters, making it extremely difficult to spot deepfakes during the hiring process.

- Scalability of AI Fraud: Perhaps the biggest shift is how scalable and repeatable these attacks have become thanks to AI. What used to require a conspiracy and substantial effort – forging documents, stealing identities, coaching an imposter through interviews – can now be automated and multiplied. A single person (or small group) can deploy dozens of polished fake applications using chatbots to customize resumes, deepfake tools for faces/voices, and readily available fake documents. If even one out of 50 attempts succeeds, the payoff can be huge. This scale means the odds of a fake slipping through are higher than ever, especially in high-volume hiring where recruiters are quickly filtering many applicants. Simply put, AI lowers the cost of fraud and raises the burden on defenders.
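
The arithmetic behind that last point can be made concrete. The sketch below is illustrative only: it assumes every application succeeds independently with the same probability, which real hiring pipelines will not satisfy exactly, and the function name is hypothetical. The 1-in-50 rate is the figure used above, not a measured statistic.

```python
def prob_at_least_one_success(per_attempt_rate: float, attempts: int) -> float:
    """Chance that at least one of `attempts` fake applications gets through.

    Assumes (for illustration) that attempts are independent and share the
    same per-attempt success rate.
    """
    if not 0.0 <= per_attempt_rate <= 1.0:
        raise ValueError("per_attempt_rate must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # P(at least one success) = 1 - P(every attempt fails)
    return 1.0 - (1.0 - per_attempt_rate) ** attempts


if __name__ == "__main__":
    rate = 1 / 50  # the "one out of 50" figure from the text
    for attempts in (1, 10, 50, 200):
        chance = prob_at_least_one_success(rate, attempts)
        print(f"{attempts:>4} applications: {chance:.1%} chance of at least one hire")
```

Under these assumptions, 50 automated applications at a 1-in-50 success rate give the attacker roughly a 64% chance of landing at least one position, which is why volume matters more than the quality of any single attempt.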

All these factors point to a necessary mindset shift: it’s no longer safe to “trust but verify” during hiring. The new mantra must be “verify before trust.” Before a new hire is brought onboard and granted access, companies need stronger assurances of identity authenticity. The old assumption that a job candidate is who they say they are – barring obvious red flags – doesn’t hold up in the deepfake era. Without specialized tools or training, HR staff struggle to spot deepfakes or recognize the subtle patterns that signal manipulated content. As we’ll see next, the risks of getting it wrong go far beyond a bad hire; they can be downright catastrophic.

The Business Risks of Hiring a Fake Employee

Bringing a deepfake or otherwise fraudulent employee into your organization isn’t just an embarrassing mistake – it can pose serious security and compliance risks. Some of the potential consequences include:

- Intellectual Property Theft: Once inside, a fake employee can siphon off sensitive data and trade secrets. Imagine a malicious hire who quietly accesses source code repositories, product designs, customer databases, or R&D reports. In several documented cases, imposters did exactly this – stealing proprietary data and even threatening to leak it for extortion. For tech companies, the damage of IP theft can run into millions of dollars and erode competitive advantage overnight.

- Insider Threat and Sabotage: A deepfake hire is essentially an infiltrator behind enemy lines. They might introduce malware, create backdoors in systems, or deliberately undermine security from within. In one incident, a fake software engineer hired at a cybersecurity firm began loading malware onto company devices shortly after onboarding. By the time the deception was uncovered, significant harm could have been done. Unlike external hackers, an employee has legitimate credentials and trust, making their attacks harder to detect as malicious.

- Fraud and Financial Losses: Rogue employees can exploit internal processes for financial gain. This could be as direct as diverting payments or as subtle as manipulating payroll or expenses. For example, an imposter might create fake vendor accounts to siphon payments, or add phantom employees (“ghost employees”) to the payroll. If the fake identity was used to bypass screening in a financial services firm, there’s risk of that person facilitating money laundering or fraud from the inside. The cost of such fraud can easily dwarf the salary that snuck out the door – we’re talking potential legal liabilities, customer reimbursements, and fines.

- Regulatory and Legal Non-Compliance: Hiring someone under false pretenses can land a company in hot water with regulators. Certain industries (finance, healthcare, government contractors, etc.) require thorough employee vetting. If a deepfake operative bypasses those and something goes wrong, the company could face investigations for insufficient controls. Even more stark is the risk of sanctions violations: The U.S. Department of Justice recently highlighted schemes where North Korean IT workers, posing as others, got hired at U.S. firms – thereby funneling money to a sanctioned regime. Companies caught unwittingly paying sanctioned individuals (due to fake identities) can suffer severe penalties. The bottom line: “I didn’t know they were fake” is not a defense that regulators or courts will accept if due diligence was lacking.

- Privacy Breaches and Customer Data Exposure: Many deepfake hires target jobs with access to sensitive data – databases of personal information, financial records, or system admin privileges. If they get in, it’s like handing the keys to an attacker. They could steal large troves of customer PII, leading to data breaches that violate privacy laws (GDPR, HIPAA, etc.) and trigger breach notification requirements. The breach costs (forensics, credit monitoring, fines) and reputational damage can be devastating. Ironically, a fake employee might even pass a company’s customer KYC (Know Your Customer) info to criminal partners, undermining the very compliance efforts meant to catch fraud.

- Reputational Damage and Erosion of Trust: Should it come to light that your organization fell victim to a deepfake hiring scam, the public fallout could be significant. Incidents involving deepfake job applicants have raised concerns among industry leaders and regulators about the broader risks to business integrity and security. Clients, partners, and shareholders may lose confidence in your controls, and negative shifts in public opinion can further damage your company’s reputation. Existing employees might feel unsafe or mistrust leadership (“How could we let an imposter in?”). The incident could attract negative media attention – “Company X Unwittingly Hires Fake Worker from Abroad” – which can linger on the internet forever. Trust, once lost, is hard to regain, and in fields like fintech or defense, reputation is everything.

In short, a fake employee isn’t just a bad hire; it’s a foothold for adversaries with potential impacts spanning cybersecurity, finance, legal compliance, and brand integrity. This is why forward-thinking companies treat workforce identity verification as mission-critical, not merely an HR formality. The stakes are comparable to a major data breach or a compliance failure – because it is a form of breach when an impostor infiltrates your ranks. Next, we’ll discuss why addressing this threat requires more than just HR’s involvement.

Deepfake Hiring Fraud Is a Compliance Issue – Not Just an HR Issue

Traditionally, vetting employees has been seen as HR’s domain (with maybe a bit of IT input for account setup). But given the risks outlined, deepfake hiring fraud has to be recognized as a wider business and compliance issue. In other words, knowing your employees is as crucial as knowing your customers. Here’s why this shift in thinking is happening:

Enterprise Risk and “Know Your Employee (KYE)” – Companies in finance and other sectors have long practiced KYC (Know Your Customer) to verify client identities and comply with anti-fraud and AML (Anti-Money Laundering) rules. Now, a similar rigor is expected internally with what some call “Know Your Employee.” Regulators and industry standards are evolving to hold businesses accountable for who they let into secure systems. If you must vet a customer opening a bank account to prevent criminal access, shouldn’t you equally vet an IT admin being hired who will have access to thousands of those accounts? Forward-looking compliance frameworks (including some new guidelines from governments) suggest that workforce onboarding should include identity assurance analogous to KYC. This means verifying that an employee or contractor is who they claim, is legally employable, and isn’t a known bad actor. It’s becoming part of enterprise risk management, not just an HR checklist.

Regulatory Expectations and Liability – In critical industries, regulators are starting to question: “How do you know Bob in accounting is really Bob?” Especially for remote hires from abroad, there’s an expectation of due diligence to prevent unwitting security threats or sanctions violations. In fact, U.S. authorities (FBI, DOJ, State Department) put out a joint advisory warning that North Korean IT operatives were fraudulently getting remote jobs – and urged companies to be vigilant in vetting to avoid sanctions breaches. Failing to catch a fake could not only lead to internal damage but also expose the company to fines or legal action for compliance failures. Workforce identity verification is therefore climbing onto the agenda of compliance officers and CISOs, not just HR directors.

Insider Threats and Zero Trust – Modern cybersecurity models like Zero Trust assume no user or device should be inherently trusted, whether outside or inside the network. However, if a fake employee is hired, that model is undermined from the start – you’ve granted insider access to an adversary. Security teams are learning they need to extend their thinking to the hiring pipeline. An NSA/CISA bulletin in 2023 emphasized planning for synthetic media and deepfake social engineering attempts as an organizational threat, recommending verification and training rather than assuming you can perfectly detect every fake. In practice, that means security should collaborate with HR on pre-employment identity checks and post-employment monitoring (especially for privileged roles). The insider threat paradigm now includes “synthetic insiders” created through AI. Managing this risk isn’t just an HR background-check task; it ties directly into cybersecurity and compliance monitoring.

Ultimately, addressing deepfake hires requires a multidisciplinary approach. HR alone can’t reasonably be expected to fend off AI-backed fraud. It takes IT and security teams deploying verification tech, compliance teams updating policies, and training across departments so that everyone knows this is a real risk. The encouraging news is that solutions exist – and aligning hiring practices with security best practices can shut down fake candidates before they get on the payroll. The next section looks at some of those solutions in detail.

How Companies Can Detect and Prevent Deepfake Job Applicants

Defending against deepfake applicants calls for adding layers of verification to your hiring and onboarding workflows. The goal is to block fraudulent candidates at multiple checkpoints – ideally before they’re hired, or at worst, before they can access sensitive systems. Here are practical strategies organizations are using to detect and prevent fake candidates:

- Biometric Identity Verification with Liveness Detection: One of the most powerful tools is to verify a candidate’s physical presence through biometrics. This means going beyond a simple video call. For critical roles or fully remote hires, consider requiring a live facial verification step – the candidate is asked to scan their face using a secure app or during a video chat, and that face is matched to their photo ID on file. Crucially, incorporate liveness detection, where the system asks the user to perform certain movements or uses AI to ensure the face is real and not an on-screen deepfake. Modern biometric verification can catch subtle cues (texture of skin, unpredictable movements) to confirm there’s a live person rather than a spoof. Some companies now mandate a live video call with spontaneous requests (e.g. “turn your head to the side,” “blink twice”) or even a brief in-person meeting after a remote hire as a final check. These steps can thwart deepfakes – as one expert noted, introducing “controlled unpredictability” in interviews forces a live human to react, making it hard for pre-recorded or AI-driven avatars to keep up. In short, verify the person, not just their documents.

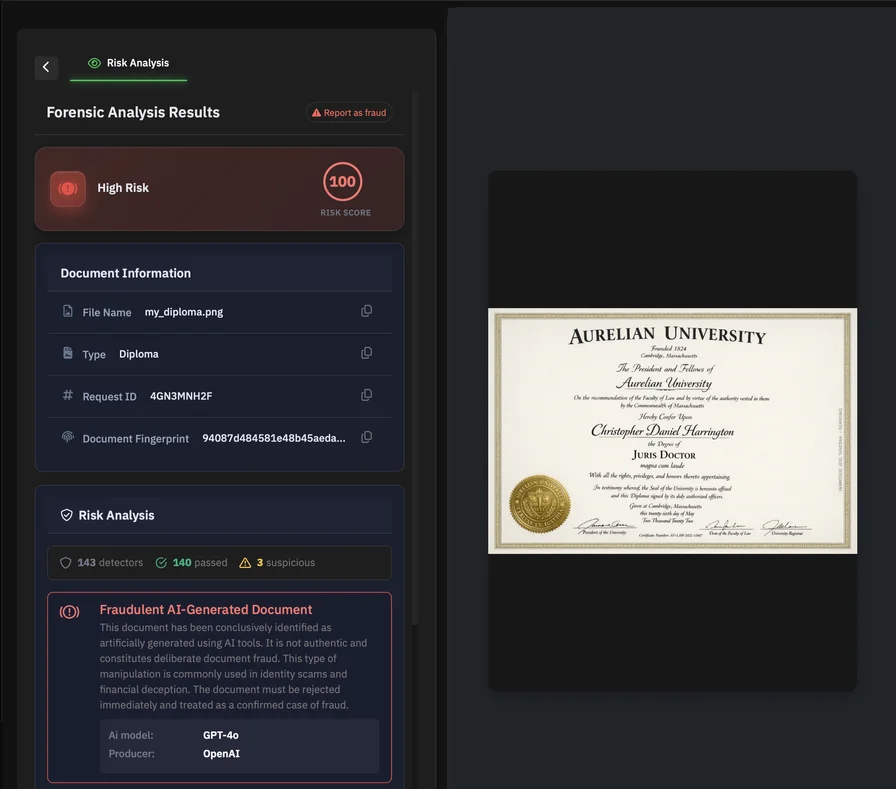

The UI of Bynns Document Forensic Analyst.

- AI-Powered Document Authentication: Since fake IDs and documents are a cornerstone of these scams, deploying advanced document verification is essential. Do not rely on an HR assistant’s eye to catch a forged ID or falsified certificate. Instead, use AI-driven document analysis tools to validate identity documents, resumes, and other credentials. These systems can automatically check if an ID’s security features (holograms, fonts, barcodes, etc.) are consistent with a real one, and whether any elements have been digitally altered. They also analyze metadata in files and look for signs of manipulation that a human would miss (for example, editing artifacts in a PDF or mismatched shadows in a scanned image). Such technology can flag an ID that’s too perfect or an obviously doctored pay stub within seconds. For instance, PDFchecker – an AI-powered document fraud detection service – can instantly analyze uploaded documents to detect forgeries or AI-generated fakes invisible to the naked eye. These kinds of tools give HR an upper hand, automatically rejecting bogus documents before a fake candidate slips through. It’s an application of “AI vs AI”: using advanced algorithms to catch the artifacts left by generative AI forgeries. Other techniques, such as blockchain verification, digital signing, and physical examination, can further enhance the detection of deepfake job applicants by providing additional layers of media authenticity and verification beyond basic detection methods.

- Cross-Database Identity Validation: Another safeguard is to cross-verify applicant information against trusted databases and records. If someone claims a degree or past employment, don’t just accept a PDF diploma or reference letter – check with the issuing institution or use third-party databases. Likewise, verify the candidate’s government ID details (such as social security number or national ID) through official channels or credit bureaus to ensure the identity is legitimate. Many fake identities will fail when details are cross-checked – for example, the name might not match any records, or the SSN was never issued (or belongs to someone deceased). Some companies are now integrating with national ID registries or using services that can validate if an ID number or passport is real and tied to the person presenting it. Also, perform a basic sanity scan of their work history: ensure the companies listed actually exist and the roles make sense. Scammers often rely on the assumption that no one will look too closely at that “Marketing Manager at XYZ Corp” from five years ago. By integrating these cross-checks (some of which can be automated via APIs), you add an extra hurdle that synthetic identities struggle to clear.

- Continuous Identity and Behavior Monitoring: Verification shouldn’t stop after the offer letter is signed. Particularly for high-risk positions (those with access to sensitive data or finances), it’s wise to implement heightened monitoring during the initial employment period. This doesn’t mean spying on employees, but rather using security analytics to watch for anomalous behavior that could indicate the person isn’t who they claimed to be or has malicious intent. For example, set alerts for unusual access patterns: a new hire downloading an atypically large volume of data, logging in at odd hours consistently via VPN, or accessing systems unrelated to their job duties. Also, verify that they complete any deferred in-person ID checks (e.g. present in person to pick up equipment or ID badge) – failure to do so could be a red flag. The principle here is similar to the “zero trust” model in cybersecurity: don’t fully trust a new employee’s identity just because they passed initial screening. Implement a probationary period of closer observation. Many companies already do 90-day performance probations; add an “identity probation” as well. Early detection of inconsistencies can prevent a long-term insider threat. If something doesn’t add up, investigate promptly – it’s far easier to handle before the person has entrenched access.

- Secure, Layered Onboarding Workflows: Redesign your onboarding to include multiple verification steps before granting full access to critical systems. Instead of issuing a laptop, VPN credentials, and data access on Day 1 automatically, consider a phased approach. For remote hires, one effective tactic is to require a face-to-face verification at a certain point – for example, the new hire must appear on a video call with HR to activate their network access, or pick up equipment in person at a designated location with ID in hand. This single in-person checkpoint can break the scheme of a fraudster operating from afar under a fake identity. Additionally, integrate tech like one-time password delivery to a verified phone number or physical address verification (sending a secure letter that the person must respond to) as part of onboarding for sensitive roles. And ensure no single check is considered infallible; use a combo of document verification, biometric checks, and third-party data validation. By layering these defenses, you force an imposter to beat multiple systems – greatly reducing the odds of success. In practice, a real candidate will find the process only slightly more cumbersome (and will appreciate the security), whereas a fake will likely stumble or opt to target an easier victim elsewhere.
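
The phased approach in the last bullet can be sketched as a simple gate that unlocks access tiers only as verification checks are passed. This is a minimal illustration, not a reference design: the check names, the tiers, and the mapping between them are all hypothetical and would need to reflect your own onboarding policy.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, FrozenSet, List, Set


class Check(Enum):
    # Hypothetical check names, loosely following the layers described above.
    DOCUMENT_VERIFICATION = "document verification"
    BIOMETRIC_LIVENESS = "biometric check with liveness"
    THIRD_PARTY_DATA = "third-party data validation"
    LIVE_CHECKPOINT = "video or in-person checkpoint"


class AccessTier(Enum):
    NONE = 0
    ORIENTATION = 1  # e.g. HR portal only
    STANDARD = 2     # e.g. laptop and VPN credentials
    SENSITIVE = 3    # e.g. production data


# Assumed policy: each tier lists every check that must already be passed.
REQUIRED_CHECKS: Dict[AccessTier, FrozenSet[Check]] = {
    AccessTier.ORIENTATION: frozenset({Check.DOCUMENT_VERIFICATION}),
    AccessTier.STANDARD: frozenset({
        Check.DOCUMENT_VERIFICATION,
        Check.BIOMETRIC_LIVENESS,
        Check.THIRD_PARTY_DATA,
    }),
    AccessTier.SENSITIVE: frozenset(Check),  # all checks, including the live one
}


@dataclass
class NewHire:
    name: str
    passed: Set[Check] = field(default_factory=set)


def highest_tier(hire: NewHire) -> AccessTier:
    """Return the highest tier whose checks, and all lower tiers' checks, are passed."""
    granted = AccessTier.NONE
    for tier in sorted(REQUIRED_CHECKS, key=lambda t: t.value):
        if not REQUIRED_CHECKS[tier] <= hire.passed:
            break  # a lower tier is unmet, so nothing above it is granted
        granted = tier
    return granted


def missing_checks(hire: NewHire, tier: AccessTier) -> List[str]:
    """List the checks still outstanding before `tier` can be granted."""
    return sorted(check.value for check in REQUIRED_CHECKS[tier] - hire.passed)


if __name__ == "__main__":
    hire = NewHire("example hire", {Check.DOCUMENT_VERIFICATION, Check.BIOMETRIC_LIVENESS})
    print(highest_tier(hire).name)
    print(missing_checks(hire, AccessTier.STANDARD))
```

Because each tier requires every check beneath it, passing one strong check never compensates for skipping another, which matches the point that no single check should be treated as infallible.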

Implementing these measures may introduce a bit more friction into hiring, but that friction is precisely what’s needed to flush out fakes. As an added benefit, these practices also deter traditional resume fraud and ensure honest candidates are thoroughly vetted. The key is to embed identity verification into the hiring pipeline in a way that is systematic and as automated as possible, so it doesn’t overly burden recruiters. Companies like PDFchecker are innovating in this space by offering integrated KYC/KYB-style verification tailored for HR workflows – essentially bringing bank-grade identity proofing into the hiring process. By adopting such tools and practices, organizations can significantly raise the barrier for any deepfake applicant trying to con their way in.
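
As one example of what automation could look like, the continuous-monitoring signals described above (unusually large downloads, activity at odd hours, access to systems outside the job's scope) can be expressed as a few simple rules. The sketch below is hypothetical throughout: the event fields, the function name, and every threshold are assumptions chosen for illustration, and timestamps are assumed to be in the employee's local time. A real deployment would draw on existing security analytics rather than hand-written rules.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import List, Set


@dataclass(frozen=True)
class AccessEvent:
    timestamp: datetime   # assumed to be in the employee's local time
    system: str           # name of the system that was accessed
    bytes_downloaded: int


def review_new_hire_activity(
    events: List[AccessEvent],
    allowed_systems: Set[str],
    daily_byte_limit: int = 5_000_000_000,  # illustrative threshold
    work_hours: range = range(7, 20),       # 07:00 to 19:59, illustrative
    odd_hours_share: float = 0.5,           # illustrative threshold
) -> List[str]:
    """Return human-readable alerts for a new hire's early access activity."""
    alerts: List[str] = []

    # Rule 1: atypically large download volume on any single day.
    per_day = defaultdict(int)
    for event in events:
        per_day[event.timestamp.date()] += event.bytes_downloaded
    heavy_days = [day for day, total in per_day.items() if total > daily_byte_limit]
    if heavy_days:
        alerts.append(f"large download volume on {len(heavy_days)} day(s)")

    # Rule 2: activity that falls mostly outside expected working hours.
    if events:
        odd = sum(1 for event in events if event.timestamp.hour not in work_hours)
        if odd / len(events) >= odd_hours_share:
            alerts.append("most activity outside expected working hours")

    # Rule 3: access to systems unrelated to the role.
    unrelated = sorted({event.system for event in events} - set(allowed_systems))
    if unrelated:
        alerts.append("access to systems outside job scope: " + ", ".join(unrelated))

    return alerts
```

An alert from rules like these is a prompt for a human review, not proof of fraud; legitimate new hires in other time zones or with broad onboarding tasks can trip them too.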

The Future of Secure Hiring in an AI-Driven World

As AI technology continues to advance, the cat-and-mouse game between fraudsters and defenders will also escalate. We can expect that deepfake tools will get even cheaper, more accessible, and harder to detect in the coming years. High-profile deepfake videos of public figures show how convincing and potentially damaging the technology has become: the “Synthesizing Obama” project demonstrated realistic facial animation of Barack Obama, and fabricated videos of Donald Trump have been used to spread misinformation and have raised concerns about election manipulation. The same techniques can be used to impersonate job applicants. What does this mean for the future of hiring?

First, businesses should recognize that AI-enabled identity fraud is here to stay – and likely to increase. The projections are sobering: by 2025, as many as 8 million deepfakes may be circulating online (up from just 500,000 a couple years prior), thanks to the explosion of generative AI content creation. With every improvement in AI realism – crisper video quality, more human-like voices, more authentic fake documents – the bar for detection rises. Even amateur scammers will be able to produce near-believable fake candidates, while advanced threat actors (including hostile nation-states or organized crime rings) will deploy highly customized personas to target specific companies. Hiring fraud could evolve into an industrialized practice, with dark web services offering “complete fake employee packages” for a fee. HR teams must plan with the assumption that some portion of applicants, especially for remote and lucrative roles, will be fraudulent.

Given this reality, hiring processes must evolve and incorporate robust identity assurance as a standard feature. Just as IT security had to shift to a “zero trust” mindset, HR and recruiting will need to embrace a posture of zero trust hiring – verify everything that can be verified about an applicant. This doesn’t mean making candidates jump through impossible hoops, but rather upgrading the process with smart, AI-enabled verifications (as we described above) and changing the internal culture to treat identity as fundamental. We may see new norms emerge, like routine biometric screening of candidates, blockchain-backed digital resumes with verified credentials, or government-issued digital identity certificates for job seekers. Companies might collaborate with trusted digital identity providers to pre-vet candidate identities in talent pools. In an AI-driven world, a resume or video interview alone will no longer suffice as proof of personhood.

Another likely shift will be closer collaboration between security, HR, and compliance teams. The silo between HR hiring and cybersecurity is already dissolving; expect that trend to accelerate. Many enterprises are starting to include CISOs or security officers in designing hiring workflows for high-risk roles, ensuring that things like device provisioning, account setups, and background checks are interwoven with identity verification. Training will also play a big role – recruiters will receive education on spotting potential deepfake clues, while security teams will train to respond quickly if a fake is suspected (for example, having an incident response playbook for a “suspected synthetic insider”). The organizations that adapt fastest will be those where all stakeholders acknowledge the threat and work together to fortify the hiring process from end to end.

Finally, we’ll likely see a stronger ecosystem of services and standards around workforce identity verification. Governments may introduce guidelines or even requirements for certain industries to implement “know your employee” protocols. There could be certifications for companies that verify employee identities to a high standard (much like ISO or SOC 2 certifications in security). On the flip side, job applicants themselves might leverage technology to prove their own identities in a privacy-preserving way, perhaps using digital ID wallets or third-party verified career profiles – making it easier for employers to trust that they are real. In essence, trust in hiring will become a two-way street, underpinned by technology: candidates proving they are genuine, and employers verifying every claim.

Conclusion: Trust Needs Verification in the AI Era

Remote hiring and distributed workforces are here to stay – and so, unfortunately, are deepfake job applicants. This emerging threat makes it clear that trust can no longer be taken for granted in the hiring process. Companies must adapt by infusing verification into every stage of onboarding, just as they have done for customer onboarding in the past. The same rigor that goes into vetting a new client under KYC rules should apply to new employees and contractors. It’s time to stop thinking of employee screening as a back-office formality and start treating it as a frontline defense against cyber infiltration.

In practical terms, this means building resilient, AI-aware hiring systems. Verify identities using multiple signals – documents, biometrics, databases – and treat anomalies with healthy suspicion. Foster a culture where recruiters feel empowered to pause or escalate a hire if something doesn’t feel right, rather than rushing to fill a seat. Embrace technologies like document forensics and liveness checks that can outmaneuver deepfake techniques. And remember that verification is not a one-off event: maintaining vigilance through the early tenure of an employee can catch any wolf in sheep’s clothing before serious damage is done.
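As a rough illustration of the "multiple signals" idea, the sketch below combines three independent checks and pauses the hire whenever any of them looks weak or is missing. Everything in it is an assumption made for illustration: the signal names, the 0.0 to 1.0 score scale, the 0.8 threshold, and the `review_candidate` function are hypothetical and do not describe any particular vendor's product.

```python
from __future__ import annotations

# Hypothetical signal names: one score per independent check, each in 0.0-1.0.
REQUIRED_SIGNALS = ("document", "biometric", "database")


def review_candidate(
    scores: dict[str, float], pass_threshold: float = 0.8
) -> tuple[str, list[str]]:
    """Return a decision plus the list of signals that need a human look.

    A missing signal is treated as a score of 0.0, so an incomplete
    verification can never be waved through.
    """
    anomalies = [
        name
        for name in REQUIRED_SIGNALS
        if scores.get(name, 0.0) < pass_threshold
    ]
    # Any anomaly pauses the hire for manual review instead of auto-rejecting,
    # because a single weak signal can also be a false alarm.
    decision = "escalate" if anomalies else "proceed"
    return decision, anomalies
```

Calling `review_candidate({"document": 0.95, "biometric": 0.5})` would escalate and name both the weak biometric score and the missing database check, which gives the recruiter a concrete reason to pause rather than a vague feeling that something is off.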

Above all, organizations should recognize that the cost of implementing these protections is far less than the cost of a successful deepfake breach. Businesses already invest in securing their networks and customer data; now they must invest in securing their hiring pipeline with equal fervor. In an AI era where seeing is not always believing, the old adage holds truer than ever: trust, but verify – and when it comes to new hires, maybe don’t trust at all until verification is complete. By weaving robust identity verification and fraud detection into hiring, companies can continue to leverage the benefits of global remote talent without opening the door to imposters. The stakes are high, but with vigilance and the right tools, businesses can stay one step ahead of fake employees and ensure that only real, qualified, and trustworthy people wear the company badge.

Remote Work: The Perfect Storm for Deepfake Job Applicants

The rapid shift to remote work has created an ideal environment for deepfake job applicants to thrive, fundamentally changing the landscape of the hiring process. With face-to-face interviews replaced by video calls and digital onboarding, companies are more vulnerable than ever to fake job applicants leveraging deepfake technology. Today’s artificial intelligence tools can create personalized videos, fake images, and even entire synthetic personas in just a few seconds, making it increasingly difficult for employers to distinguish between real and fake candidates.

Deepfake videos and face-swapping technology, powered by advanced neural networks and generative adversarial networks (GANs), have reached a level of sophistication where even experienced hiring managers can be fooled. Fake job applicants can now participate in video interviews using AI-generated avatars that mimic real people, complete with realistic facial expressions and cloned voices. Because the generated video can be tailored to match a candidate’s claimed identity, the hiring process becomes a prime target for fraud. The result is a new breed of deepfake job candidate who can slip through traditional background checks and onboarding procedures, potentially gaining access to sensitive company data or even installing malware on corporate devices.

The risks don’t stop at hiring fraud. The same deepfake technology used to create fake job applicants can also be weaponized to produce deepfake pornography (fake pornographic videos featuring real people’s faces). This not only raises concerns about privacy and consent but can also damage a company’s reputation and create a hostile work environment if such content is used for harassment or blackmail. Employers must remain vigilant, as the proliferation of fake content can have far-reaching consequences for both individuals and organizations.

Detecting deepfakes in the hiring process requires a multi-layered approach. While background checks and voice authentication can help verify a candidate’s identity, they are not foolproof. Employers should look for telltale signs of deepfakes during video interviews, such as unnatural eye movement, mismatched audio and video, or subtle inconsistencies in facial expressions. Advanced deepfake detection methods, including deep learning algorithms and face swapping detection tools, are becoming essential for companies that want to combat deepfakes and protect their hiring process.
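To make the "mismatched audio and video" sign more concrete, here is a toy sketch of one way a lip-sync offset could be estimated. It assumes two per-frame series have already been extracted by some other tool: a mouth-openness value and an audio loudness value for each video frame. Both inputs, the function names, and the default thresholds are hypothetical, and production deepfake detectors rely on trained models rather than a simple correlation like this.

```python
from __future__ import annotations


def best_lag(mouth: list[float], audio: list[float], max_lag: int = 5) -> int:
    """Return the frame offset at which the two series correlate best.

    A positive result means the audio trails the mouth movement.
    """
    if len(mouth) != len(audio):
        raise ValueError("series must have the same length")
    n = len(mouth)
    if n <= max_lag:
        raise ValueError("series must be longer than max_lag")

    def centered(xs: list[float]) -> list[float]:
        mean = sum(xs) / len(xs)
        return [x - mean for x in xs]

    m, a = centered(mouth), centered(audio)

    def score(lag: int) -> float:
        # Pair mouth frame i with audio frame i + lag; skip out-of-range frames.
        products = [m[i] * a[i + lag] for i in range(n) if 0 <= i + lag < n]
        return sum(products) / len(products)

    return max(range(-max_lag, max_lag + 1), key=score)


def looks_out_of_sync(
    mouth: list[float], audio: list[float], tolerance_frames: int = 2
) -> bool:
    """Flag the clip when the best-matching offset exceeds the tolerance."""
    return abs(best_lag(mouth, audio)) > tolerance_frames
```

A flag from a check like this is only a prompt for closer review, since network jitter on an ordinary video call can also push audio and video apart.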

Over the next three years, the use of deepfake technology in remote hiring is expected to increase, making it critical for employers to stay ahead of the curve. Companies like Google and Facebook are already investing in AI tools to detect and flag fake videos and images, setting an example for others to follow. Employers should consider adopting similar technologies, collaborating with law enforcement, and sharing best practices to strengthen their defenses against deepfake-related hiring fraud.

Ultimately, the rise of remote work has created a perfect storm for deepfake job applicants. To protect their business and ensure the integrity of the hiring process, employers must educate themselves and their teams about the risks, implement advanced detection methods, and develop robust practices for verifying candidate identities. By remaining vigilant and embracing new technologies, companies can safeguard their workforce against the growing threat of deepfakes and maintain trust in an increasingly digital hiring landscape.
